Enterprise-Grade Speech Emotion Recognition API

Detect emotion from voice, not just words.

Detect emotion from voice with high precision. FacialProof’s Speech Emotion Recognition API analyzes tone, pitch, prosody, and vocal stress to reveal real human sentiment in real time or from recorded audio, without relying on transcripts alone.

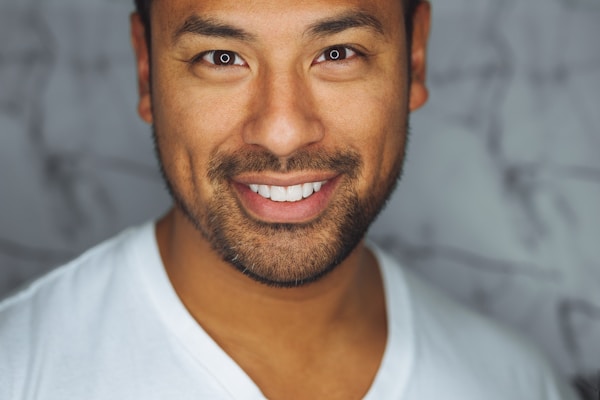

👁️ Facial Recognition

Detect faces in real-time using your camera

Reference

Results

{

"success": true,

"faces_detected": 3,

"faces": [

{

"id": 1,

"confidence": 0.95,

"bounding_box": {"x": 150, "y": 200, "width": 300, "height": 350},

"age": "27-29 years old",

"emotion": "Happy",

"gender": "Female"

},

{

"id": 2,

"confidence": 0.92,

"bounding_box": {"x": 500, "y": 180, "width": 280, "height": 320},

"age": "25-27 years old",

"emotion": "Neutral",

"gender": "Male"

},

{

"id": 3,

"confidence": 0.88,

"bounding_box": {"x": 800, "y": 250, "width": 200, "height": 250},

"age": "6-8 years old",

"emotion": "Happy",

"gender": "Male"

}

]

}

Live Camera

ID Document

🎭 Facial Emotion Recognition

Upload an image or use real-time camera for face shape analysis using advanced AI

Face Shape

Personal Attributes

Face Measurements

Detection Info

🎤 Advanced Voice Emotion Detector

AI-Powered Real-Time Emotion Analysis

Real-time and batch audio processing • Built for call centers, AI voice agents, and analytics platforms

Turn Voice Signals into Actionable Emotional Intelligence

Words don’t tell the full story. FacialProof converts raw speech signals into structured emotional data your systems can act on instantly. from live conversations to large-scale audio archives.

Real-Time Voice Emotion Analysis

Analyze live audio streams with sub-50ms latency. Detect emotional shifts during calls as they happen, ideal for agent assist, IVR, and AI voice systems.

Prosody & Vocal Stress Detection

Capture emotion through pitch variation, tempo, pauses, and energy, even when transcripts appear neutral.

Noise-Resilient Audio Processing

Designed for VoIP, mobile calls, and noisy environments. Models focus on speaker affect, not background artifacts.

Build emotionally intelligent voice systems with FacialProof’s Speech Emotion Recognition API.

Advanced Speech Emotion Recognition, Built for Production

FacialProof’s affective computing models are trained to handle real-world audio conditions at scale, not lab-grade recordings.

Real-Time Emotion & Mood Inference

Emotion probability scores per time segment

Detect anger, frustration, joy, fear, sadness, neutrality

Continuous emotional tracking during calls

Audio Streaming API Batch Speech Emotion Analysis

Analyze live audio via WebSocket or WebRTC with sub-50ms latency. Built to scale from single sessions to thousands of concurrent voice streams without performance loss.

Upload thousands of call recordings for historical sentiment analysis and quality assurance (QA).

High-Resolution Emotional Metrics

Access detailed emotion curves with confidence scoring. Metrics are structured for easy use in dashboards, real-time alerts, and automated decision systems.

Call Centers & Customer Support

Detect frustration, escalation risk, and empathy gaps in real time. Trigger supervisor alerts or post-call QA scoring automatically.

Conversational AI & Voice Assistants

Give voice bots emotional awareness. Route users to humans when frustration or confusion is detected.

Healthcare & Mental Wellness

Track vocal biomarkers related to stress, anxiety, and emotional fatigue across sessions, without facial data.

Why FacialProof for Speech Emotion Recognition

What the API Actually Measures

Most “emotion APIs” rely on:

- Text sentiment only

- Limited emotional classes

- High latency batch processing

- Audio-native affective computing

- Multilingual speech emotion models

Developer-First Speech Emotion Recognition API

We built the Voice Emotion Detection SDK to be plug-and-play. Whether you need a speech emotion recognition SDK for a mobile app or a high-throughput REST API for your cloud backend, we have you covered.

import voice_emotion_api

# Connect to the stream

client = voice_emotion_api.Client(api_key="your_key")

stream = client.connect_stream()

# Analyze live audio chunk

result = stream.analyze_prosody(audio_chunk)

if result.emotion == "anger" and result.confidence > 0.85:

alert_supervisor(result.timestamp)FacialProof vs Other Emotion Recognition APIs

Are you looking for an Azure Speech Emotion Recognition API replacement

Many big-tech providers have restricted or deprecated their public emotion detection endpoints. We offer a dedicated, privacy-first alternative.

Compare FacialProof with platforms such as Microsoft Azure, Google Cloud, Amazon, Hume AI, and AssemblyAI.

| Feature | Our Voice Emotion API | Azure / Amazon / Google | Hume AI / AssemblyAI |

|---|---|---|---|

| Emotion Granularity | 24+ Emotional States | Basic Sentiment (Pos/Neg) | High Granularity |

| Latency | Real-Time (<50ms) | Batch / Slow | Real-Time |

| Privacy Policy | Stateless (No Storage) | Data often retained | Varies |

| Developer Cost | Generous Free Tier | Enterprise Contracts | High Start-up Cost |

| Audio Clarity | Advanced Noise Filtering | Standard | High Accuracy |

FAQs

Is there a free Speech Emotion Recognition API?

Yes. FacialProof offers a free tier with monthly usage limits, ideal for testing, MVPs, and research.

Does Speech Emotion Recognition API work in real time?

Yes. The API supports low-latency streaming and returns emotion frames continuously during live audio.

Is this a replacement for Microsoft Emotion Recognition APIs?

Yes. As Microsoft and other providers retired or restricted emotion detection endpoints, FacialProof provides a focused, audio-only alternative.

Which languages are supported?

English, Spanish, French, German, and Mandarin, with more in progress.